BibTeX

@inproceedings{batalo-etal-2026-hype,

title = "Hype or not? Formalizing Automatic Promotional Language Detection in Biomedical Research",

author = "Batalo, Bojan and

Shimomoto, Erica K. and

Satav, Dipesh and

Millar, Neil",

editor = "Demberg, Vera and

Inui, Kentaro and

Marquez, Llu{\'i}s",

booktitle = "Proceedings of the 19th Conference of the {E}uropean Chapter of the {A}ssociation for {C}omputational {L}inguistics (Volume 1: Long Papers)",

month = mar,

year = "2026",

address = "Rabat, Morocco",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2026.eacl-long.328/",

doi = "10.18653/v1/2026.eacl-long.328",

pages = "6979--6992",

ISBN = "979-8-89176-380-7",

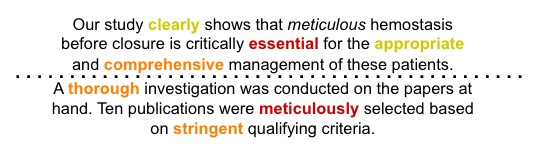

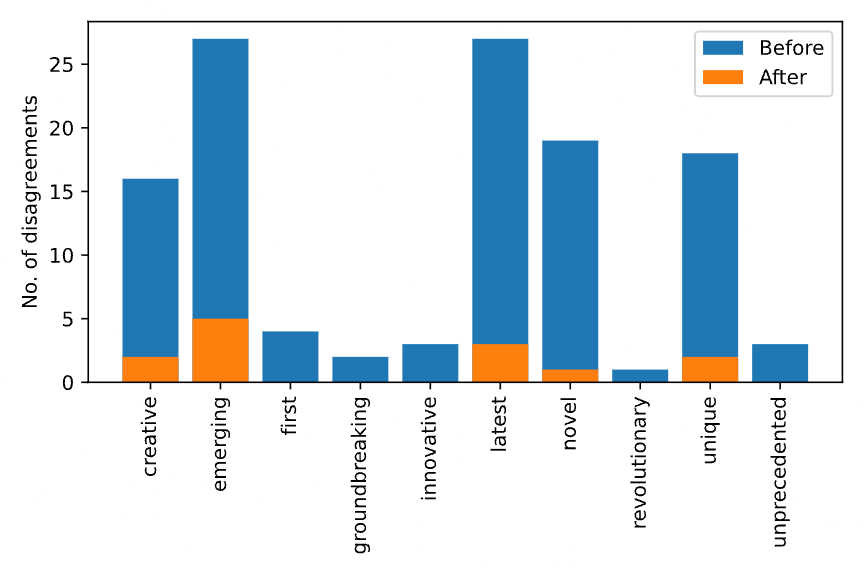

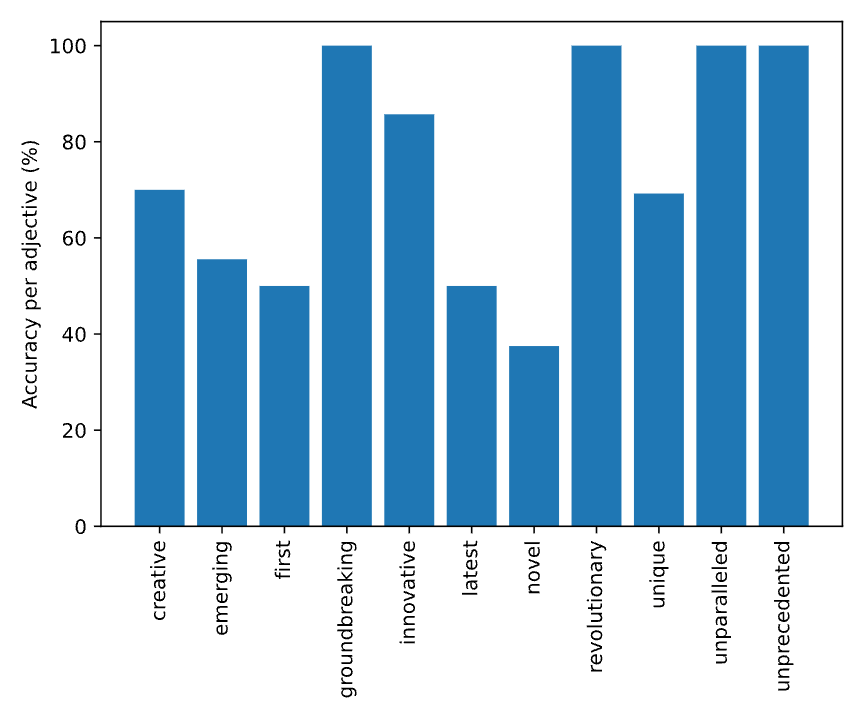

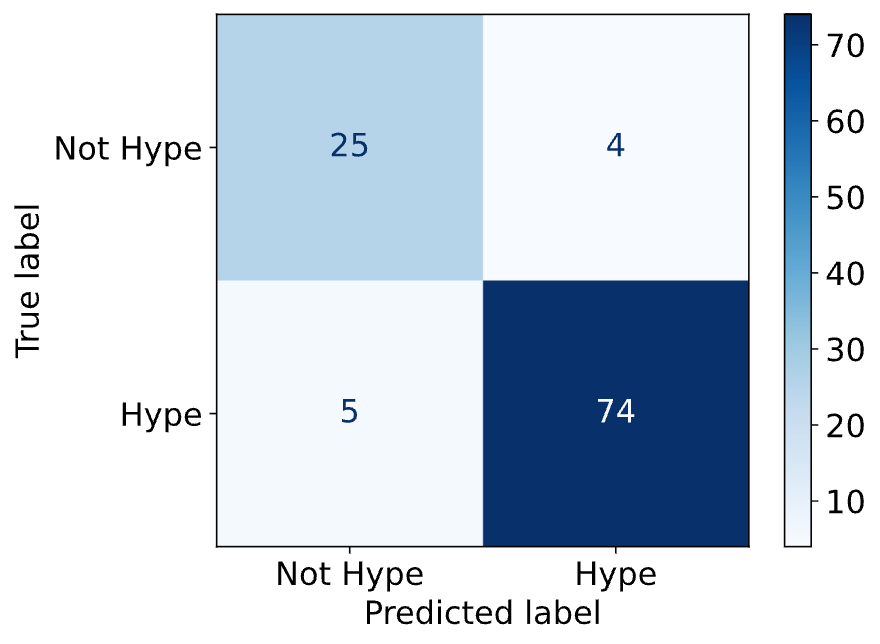

abstract = "In science, promotional language ({'}hype') is increasing and can undermine objective evaluation of evidence, impede research development, and erode trust in science. In this paper, we introduce the task of automatic detection of hype, which we define as hyperbolic or subjective language that authors use to glamorize, promote, embellish, or exaggerate aspects of their research. We propose formalized guidelines for identifying hype language and apply them to annotate a portion of the National Institutes of Health (NIH) grant application corpus. We then evaluate traditional text classifiers and language models on this task, comparing their performance with a human baseline. Our experiments show that formalizing annotation guidelines can help humans reliably annotate candidate hype adjectives and that using our annotated dataset to train machine learning models yields promising results. Our findings highlight the linguistic complexity of the task and the potential need for domain knowledge. While some linguistic works address hype detection, to the best of our knowledge, we are the first to approach it as a natural language processing task. Our annotation guidelines and dataset are available at https://github.com/hype-busters/eacl2026-hype-dataset."

}